XMC™ Forum Discussions

XMC™

First-time XMC user here. I installed DAVE 4 and connected an XMC 2Go to a USB port.

I went through the XMC 2Go Initial Start-up Guide, but I could not get HTerm to communicate with the XMC; the only port available is COM1.

I started J-Link V5.10l and it reported no emulators connected via USB.

Did I miss any drivers to install? I am using Windows 7 64-bit. Thanks for the help.

XMC™

Hi,

I just got the kit and assumed it can run the web server function without any code change. The LEDs on the board are working properly, but the web server is not.

The PC side is configured with the IP address 192.168.0.11, and I assume the kit uses 192.168.0.10.

The cable is a crossover cable. With "ping 192.168.0.10" on the command line, I got:

"Reply from 192.168.0.11: Destination host unreachable."

Please let me know if my assumption is incorrect or if I did something wrong.

If the original code in the Relax Kit is for a web server, where can I download its DAVE project files?

Compared with the XMC4500 Relax Kit, the XMC1300 Boot Kit has many more support documents, getting-started files, and example code.

Thanks,

Ming

XMC™

Hi all,

Summary: Poor DNL and INL for the VADC module. Oversampling improves things, but the ENOB is still very low for the 12-bit configuration on the XMC4400. It is possible that startup calibration is not happening, as the "CAL" bit (which indicates that calibration is in progress) never seems to get set (I've tried checking it with a "while" loop; see the ADC AI.H004 errata notes). The purpose of the question is to narrow down the root causes of this behaviour.

I am currently working with the ADC module in an attempt to better understand its properties. As of now, I am getting very inaccurate results, i.e. very large DNL and INL and quite low ENOB. One of the few non-obvious things I did was connecting GND to VAGND, which almost halved the DNL, but it is still very high. I am using the XMC4400 kit, sampling at 400 kHz with 4x oversampling and averaging. At this point it's not clear to me whether the problem is in the software or whether there is something in the hardware setup that I am doing wrong. Could anyone please suggest a possible explanation as to why the accuracy might be so poor? Thank you!

As an additional question that's related to the topic - do I need to connect a supply to the VAREF pin to provide a voltage reference if my package has distinct VAREF and VDDA pins?

A small update: I've tried waiting for the ARBCFG.CAL bit to be set to '1' - as suggested in errata application notes - in order to ensure proper calibration. I am only using group #0, hence I inserted the following code into xmc_vadc.c/XMC_VADC_GLOBAL_StartupCalibration() API:

    XMC_ASSERT("XMC_VADC_GLOBAL_StartupCalibration:Wrong Module Pointer", (global_ptr == VADC))

    global_ptr->GLOBCFG |= (uint32_t)VADC_GLOBCFG_SUCAL_Msk;

    /* Inserted the code below to comply with errata recommendations */
    while(!(VADC_G0->ARBCFG & VADC_G_ARBCFG_CAL_Msk)){
        __asm(" nop"); // Wait for the bit to be set to 1
    }

What I think it should be doing is watching the CAL bit for the particular group that I am working with - and waiting until it becomes '1', meaning that the calibration process has started. However, the program seems to be stuck in that loop; and in debug mode, manually setting GLOBCFG.SUCAL bit doesn't trigger the startup calibration. Could you please - if possible, of course 🙂 - provide me with some insight as to why this code is not working as expected.
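While narrowing this down, it may help to bound the wait so that a flag that never rises shows up as a reported timeout instead of a hang. The following is only a minimal sketch: `wait_for_bits_set` and the poll limit are hypothetical names and values, not part of XMClib, and the sketch says nothing about why the CAL bit stays at 0.

```c
#include <stdbool.h>
#include <stdint.h>

/*
 * Poll a memory-mapped register until any bit in `mask` reads as 1,
 * giving up after `max_polls` reads. Hypothetical helper, not an XMClib API.
 * Returns true if the bit was seen set, false on timeout.
 */
bool wait_for_bits_set(const volatile uint32_t *reg, uint32_t mask,
                       uint32_t max_polls)
{
    while (max_polls-- > 0u) {
        if ((*reg & mask) != 0u) {
            return true;   /* flag observed */
        }
    }
    return false;          /* flag never rose within the poll budget */
}
```

On the target this could be called as `wait_for_bits_set(&VADC_G0->ARBCFG, VADC_G_ARBCFG_CAL_Msk, 100000u)`, using the register and mask names from the snippet above; the poll budget of 100000 is an arbitrary assumption. A `false` return can then be logged or trapped in the debugger.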

And one more thing I would like to address - when I did the oversampling, I used both SW and HW options (i.e. sampling very fast or accumulating results by "turning on" this function). In particular, I used queue oversampling with FIFO results storage. My expectation was that if I put the same channel in the queue 8 times, with the first conversion triggered by the CCU status bit signal and the "refill" option enabled, then all 8 conversions would occur sequentially after the trigger and then be placed back in the queue, awaiting the next "round". The timer was configured to 200 kHz so that the sampling rate would also be 200 kHz. I've checked the conversion times against the sampling period, and there seems to be plenty of time to do all 8. However, when I sampled the waveform (a sawtooth), I found that I had to adjust the input frequency because the sampling actually happened at 66.7 kHz! Could it be that after the refill the conversion requests don't wait for the trigger and just start right away?

All the best,

Andrey

XMC™

Hi folks,

I have been experimenting a lot with the XMC4500 recently and stumbled upon something very confusing.

Apparently, the FLASH0_FCON.IDLE bit has a weird and unexpected performance impact.

In the attached DAVE v4.1.4 project the following code is executed from uncached PMU FLASH at 0x0c002000:

movs r7, #10

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

loop:

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

nop

sub r7, #1

cmp r7, #0

bne loop

nop

nop

nop

nop

bx lr

At the end of each measurement I compute the elapsed time by means of the SysTick register.

For the above code snippet I observe the following execution times:

FLASH0_FCON.IDLE = 0:

- WS=3 => 722 cycles

- WS=4 => 808 cycles

- WS=5 => 861 cycles

- WS=6 => 914 cycles

- WS=7 => 968 cycles

- WS=8 => 1022 cycles

So with increasing PFLASH wait states the execution times are higher, which is of course expected.

If I disable the static prefetching, I observe the following execution times:

FLASH0_FCON.IDLE = 1:

- WS=3 => 755 cycles

- WS=4 => 704 cycles

- WS=5 => 716 cycles

- WS=6 => 728 cycles

- WS=7 => 757 cycles

- WS=8 => 789 cycles

Here, execution times are always lower than with enabled static prefetching, even though mostly linear code is executed (except for the single branch every three FLASH pages).

The only exception is when 3 wait states are being configured.

How can this be explained? Why is 4 wait states with disabled static prefetch about 100 cycles faster than 3 wait states with enabled static prefetch?

Attached is my project.

To reproduce you can do "Run to Line" framework.S:88 (and adjust the hardware settings accordingly).
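To make the two tables easier to compare, here is a small sketch that computes, for each wait-state setting, how many cycles are saved by setting FLASH0_FCON.IDLE = 1. The cycle counts are copied from the measurements in this post; the function name `cycles_saved_by_idle` is just an illustrative choice.

```c
#include <limits.h>
#include <stddef.h>

#define NUM_MEASUREMENTS 6

/* Measured values copied from the post (cycles for the nop loop). */
static const int wait_states[NUM_MEASUREMENTS]  = {3, 4, 5, 6, 7, 8};
static const int cycles_idle0[NUM_MEASUREMENTS] = {722, 808, 861, 914, 968, 1022};
static const int cycles_idle1[NUM_MEASUREMENTS] = {755, 704, 716, 728, 757, 789};

/*
 * Cycles saved by IDLE = 1 relative to IDLE = 0 at the given wait-state
 * setting. A negative result means IDLE = 1 was slower.
 * Returns INT_MIN if no measurement exists for `ws`.
 */
int cycles_saved_by_idle(int ws)
{
    for (size_t i = 0; i < NUM_MEASUREMENTS; i++) {
        if (wait_states[i] == ws) {
            return cycles_idle0[i] - cycles_idle1[i];
        }
    }
    return INT_MIN;
}
```

With the posted numbers this gives -33 cycles at WS=3, 104 at WS=4, and 233 at WS=8, so the gap between the two modes widens as wait states are added.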

XMC™

Hello,

I am working on a project where I need eight phase-shifted PWM signals (four PWM signals plus their complementary signals). I'm using a Dual Active Bridge, which is more or less two phase-shifted full bridges combined.

Right now I am working with a microcontroller from another company. I am able to generate the signals, but unfortunately there is a problem with the PWM generation that is not really fixable. So I was looking for another microcontroller.

I saw the XMC4500 with its CCU8 and was interested in testing it. I bought the XMC4500 Relax Kit and am now trying to generate the signals. Unfortunately, the two CCU8 modules do not seem to be enough to generate the signals. Am I overlooking something? Is it not possible to generate the eight PWM signals?

Best regards,

Milad

XMC™

Hi,

I am developing an appliance that needs to do some reasonable time-keeping and have been experimenting with the various timers available on the XMC4500.

While debugging very weird behaviour (that I've fixed now; turns out I was doing too much work in an interrupt) of a CCU-based timer I happened upon something unrelated: I have an interrupt tied to the CCU Timer and increment a ccu_ticks variable in the interrupt. In the main loop, I calculate the time passed based on the ccu_ticks and from the RTC.

If I enable the CCU clock in sleep state and call __WFI in the main loop to make the CPU go to sleep, the CCU and RTC clock very visibly drift against each other. When I don't call __WFI, they seem to keep step very well. Is there any explanation for this? Does it take the CPU too long to wake up from sleep when the CCU interrupt is hit?

Here is the main code:

    uint32_t ccu_ticks = 0;

    void CCU_Timer()
    {
        ccu_ticks++;
    }

    int main(void)
    {
        /* DAVE init cut out */

        // Enable CCU clock in sleep
        SCU_CLK->SLEEPCR |= SCU_CLK_SLEEPCR_CCUCR_Msk;

        uint8_t is_initial = 1;
        int32_t initial_offset = 0x1337;

        while(1U)
        {
            XMC_RTC_TIME_t rtc_timeval;
            uint32_t ccu_time;
            uint32_t rtc_time;

            RTC_GetTime(&rtc_timeval);

            // ccu_tick increments every 100ms, so divide by ten to get seconds
            ccu_time = (ccu_ticks / 10);

            // get high number of rtc seconds
            rtc_time = rtc_timeval.hours * 60 * 60 + rtc_timeval.minutes * 60 + rtc_timeval.seconds;

            int32_t diff = ((int32_t)rtc_time - (int32_t)ccu_time);

            if (is_initial) {
                initial_offset = diff;
                is_initial = 0;
            } else {
                //this should not be hit for a long time
                if ((diff - initial_offset) < -2 || (diff - initial_offset) > 2) {
                    while (1U)
                    {
                    }
                }
            }

            __WFI();
        }
    }

I attached the project. It includes Apps and code for displaying CCU time, RTC time, and the drift on a standard 16x2 character display. That code can be cut out if you don't have a test board with a character LCD handy.
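One detail of the comparison above: `rtc_time` is built from hours, minutes, and seconds, so it restarts at 0 every midnight, while `ccu_time` keeps counting. The following sketch, which is separate from the drift question itself, shows one way to fold the difference so a midnight rollover is not mistaken for drift. The helper names are hypothetical, and it assumes the true drift stays well below half a day.

```c
#include <stdint.h>

#define SECONDS_PER_DAY 86400

/* Seconds since midnight from an hours/minutes/seconds triple. */
int32_t seconds_of_day(uint32_t hours, uint32_t minutes, uint32_t seconds)
{
    return (int32_t)(hours * 3600U + minutes * 60U + seconds);
}

/*
 * Difference (RTC - CCU) folded into the range [-43200, 43199], so that
 * the RTC value wrapping at midnight does not appear as an 86400 s jump.
 */
int32_t wrapped_diff(int32_t rtc_seconds, uint32_t ccu_seconds)
{
    int32_t ccu_mod = (int32_t)(ccu_seconds % SECONDS_PER_DAY);
    int32_t diff = (rtc_seconds - ccu_mod) % SECONDS_PER_DAY;

    if (diff >= SECONDS_PER_DAY / 2) {
        diff -= SECONDS_PER_DAY;
    }
    if (diff < -(SECONDS_PER_DAY / 2)) {
        diff += SECONDS_PER_DAY;
    }
    return diff;
}
```

For example, an RTC reading of 00:00:05 against a CCU count of 86399 seconds yields a difference of 6 seconds rather than -86394.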

XMC™

Hello,

I'm planning to implement a firmware-over-the-air update mechanism on an XMC device.

The file shall be downloaded via the cell network and stored in an external flash memory.

After that, a bootloader shall replace the previous application.

So I have the following questions:

* How should the internal flash be segmented so that the bootloader resides in a protected area?

* Where should the ISR addresses (vector table) be located?

* What kind of file type should the compiler generate so that it can be transferred to the internal code flash?

* Are there any app notes or code examples describing a similar situation?

Thanks in advance for any suggestions!

George

XMC™

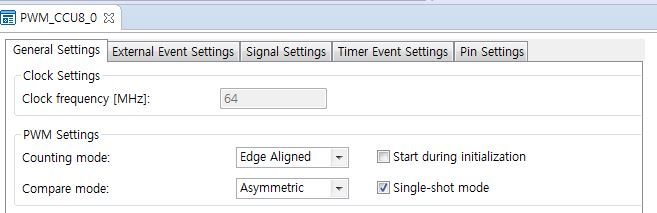

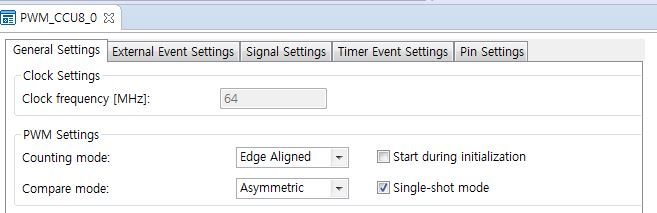

Hi~~

Board : XMC1302 boot kit

DAVE ver : DAVE4.0.0

I tried to enable the single-shot mode function of PWM_CCU8.

step1 : single-shot mode -> change -> step2 : normal PWM mode

Is there any way to switch to the normal PWM mode from the single-shot mode?

Please answer fast.

Thanks

XMC™

Hi,

I have a 320x240 18-bit RGB LCD (PH320240T-009-IC1Q) that I am trying to control with the XMC4500.

Reading the available documentation, I understand that I should use an SDRAM to store the raw data that will be displayed on the LCD. Considering that I have 18 bits per pixel, I assume that I will need a 32-bit SDRAM.

But in the XMC4500 reference manual, I see that the EBU only supports 16-bit SDRAM.

So would you say that this LCD is incompatible with the XMC4500? Is there some kind of workaround that I can use to overcome this?
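As a rough sketch of the arithmetic behind this question: the code below computes the frame buffer size for a 320x240 panel at a given storage width per pixel, and shows how an 18-bit RGB666 pixel could be reduced to a single 16-bit RGB565 halfword. Whether dropping the lowest red and blue bit is acceptable for this panel, and how the panel's 18 data lines would then be wired, are assumptions not covered by the post; the function names are hypothetical.

```c
#include <stdint.h>

/* Bytes needed for one frame when each pixel is stored in `bits_per_pixel` bits. */
uint32_t framebuffer_bytes(uint32_t width, uint32_t height, uint32_t bits_per_pixel)
{
    return (width * height * bits_per_pixel) / 8u;
}

/*
 * Reduce 6-bit-per-channel RGB666 to 16-bit RGB565 by discarding the
 * least significant bit of red and blue (green keeps all 6 bits).
 */
uint16_t rgb666_to_rgb565(uint8_t r6, uint8_t g6, uint8_t b6)
{
    uint16_t r5 = (uint16_t)((r6 & 0x3Fu) >> 1);
    uint16_t g  = (uint16_t)(g6 & 0x3Fu);
    uint16_t b5 = (uint16_t)((b6 & 0x3Fu) >> 1);

    return (uint16_t)((r5 << 11) | (g << 5) | b5);
}
```

For a 320x240 frame this works out to 153600 bytes at 16 bits per stored pixel and 307200 bytes at 32 bits per stored pixel.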

XMC™

Hi all,

I have an XMC4400 evaluation board. At some point in my program I declare an array that is big enough to occupy memory space on either side of the PSRAM and DSRAM1 boundary (see image below).

Below is a snippet from the ".map" file that shows that indeed the array has one of its members on the border.

    /* Map file snippet */
    .bss            0x1fffc898     0x4009 load address 0x0c001eb4
                    0x1fffc898                . = ALIGN (0x4)
                    0x1fffc898                __bss_start = .
     *(.bss)
     *(.bss*)
     .bss.resultsArray
                    0x1fffc898     0x4000 ./main.o
                    0x1fffc898                resultsArray
     .bss.resultsArrayIndex
                    0x20000898        0x2 ./main.o
                    0x20000898                resultsArrayIndex
     *fill*         0x2000089a        0x2

The linker script defines the following region that crosses the boundary between the regions; this is where the ".bss" data is placed, too, as per linker script.

    /* Linker script snippet */
    SRAM_combined(!RX) : ORIGIN = 0x1FFFC000, LENGTH = 0x14000

    /* Some linker script code here */

    /* BSS section */
    .bss (NOLOAD) :
    {
        . = ALIGN(4);
        __bss_start = .;
        * (.bss);
        * (.bss*);
        * (COMMON);
        *(.gnu.linkonce.b*)
        __bss_end = .;
    } > SRAM_combined
    __bss_size = __bss_end - __bss_start;

However, when the array is allocated memory space during the startup, upon crossing the boundary between memory regions (unaligned, too, as the offending address shown in BFAR register is 0x1FFF FFFD), the program generates a Bus Fault. Could you please help me figure out a way to go about this issue?

Thank you!

Regards,

Andrey
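The address arithmetic in the map file can be checked with a small sketch. It assumes the PSRAM/DSRAM1 boundary sits at 0x20000000, which is inferred from the addresses quoted in the post rather than taken from the reference manual, and the helper names are hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed first DSRAM1 address, inferred from the map file and BFAR value. */
#define DSRAM1_START 0x20000000u

/* True if the byte range [start, start + size) has bytes on both sides of `boundary`. */
bool straddles_boundary(uint32_t start, uint32_t size, uint32_t boundary)
{
    /* Written as size > boundary - start to avoid overflow in start + size. */
    return start < boundary && size > (boundary - start);
}

/* Number of bytes of an object starting at `start` that lie below `boundary`. */
uint32_t bytes_below_boundary(uint32_t start, uint32_t boundary)
{
    return (start < boundary) ? (boundary - start) : 0u;
}
```

With the values from the map file, `resultsArray` (0x4000 bytes at 0x1fffc898) straddles the boundary with 0x3768 bytes below it, and a 4-byte access at the faulting address 0x1FFFFFFD would also span both regions, whereas an aligned 4-byte access at 0x1FFFFFFC would not.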