Hello,

I tried to stream 1920x1080 60 fps RAW10 video on the CX3, following this KBA: https://community.cypress.com/t5/Knowledge-Base-Articles/Streaming-RAW10-Format-Input-Data-to-16-24-...

and I got an image like this:

So we tried to decode this image based on the data packing described in the above KBA.

From the above image we can see that the LSBs of each pixel are in the 5th byte, so we removed every 5th byte in a single frame and re-plotted the result as an 8-bit image, but we still didn't get the original image.

Here I'm sharing the raw image captured by v4l2 and the image that we modified.

Original Image

Modified image

Kindly tell us how to decode this image into the original image.

Thank you.

- Labels: USB Superspeed Peripherals


Hello,

We currently do not have a reference host application/code that can reconstruct the original image from the packed video stream to share with you.

I can see a big difference between the original image (taken with your mobile camera) and the image reconstructed from the video stream transmitted by CX3. I also see that the image reconstructed from the UVC player is similar in shape to the image reconstructed by following the procedure mentioned before in this thread. I feel that the original image is still not recovered from the packed video stream.

As mentioned before, CX3 transmits the data obtained from the image sensor to the host. As we make use of 24 bits to support 60fps, the data from the image sensor is packed and stored into the DMA buffers inside CX3.

A 12-byte UVC header is added at the start of each DMA buffer and is used to distinguish the frames. This UVC header is not part of the image and must be skipped when reconstructing the image from the video stream. The data that follows the UVC header is the actual frame data. So, if a video frame requires 100 DMA buffers, each DMA buffer will have a 12-byte header at the start, and the header must be skipped in all 100 buffers.

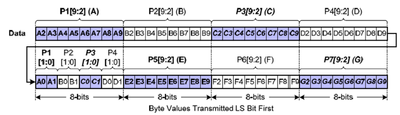
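The header removal described above could be sketched as follows. This is a minimal illustration, not reference code: the function name `strip_uvc_headers` and the `buffer_size` parameter are hypothetical, and the sketch assumes the captured stream is a plain concatenation of equally sized buffers, each starting with a 12-byte header. If the frame was captured through a host UVC driver such as V4L2, the headers may already have been removed, in which case this step should be skipped.

```python
def strip_uvc_headers(stream: bytes, buffer_size: int, header_len: int = 12) -> bytes:
    """Drop the first `header_len` bytes of every `buffer_size`-byte chunk.

    Assumption: `stream` is a raw concatenation of DMA buffers, each of which
    starts with a 12-byte UVC header. `buffer_size` must be taken from the
    firmware configuration; it is not specified in this thread.
    """
    if buffer_size <= header_len:
        raise ValueError("buffer_size must be larger than header_len")
    payload = bytearray()
    for start in range(0, len(stream), buffer_size):
        chunk = stream[start:start + buffer_size]
        # Keep only the frame data that follows the header.
        payload += chunk[header_len:]
    return bytes(payload)


# Two toy buffers of 16 bytes each (the second one is only partially filled).
demo = b"\xAA" * 12 + b"\x01\x02\x03\x04" + b"\xBB" * 12 + b"\x05\x06"
frame_data = strip_uvc_headers(demo, buffer_size=16)
```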

The frame data is sampled by the GPIF II block of CX3. When 24 bit output is used, the GPIF II block samples the data as follows:

- First clock cycle - P1[9:2], P2[9:2], P3[9:2],

- Second clock cycle - P4[9:2], P1[1:0], P2[1:0], P3[1:0], P4[1:0], P5[9:2]

- Third clock cycle – and so on.

For the first clock cycle (P1[9:2], P2[9:2], P3[9:2]), the data is presented to the GPIF II pins as follows:

Pixel bit - GPIF II pin

P1[2] - DQ0 LSB

P1[3] - DQ1

....

....

P3[9] - DQ23 MSB

This data is then placed in little-endian format into the DMA buffers. So, the first location of the DMA buffer (after the UVC header) holds P1[9:2], the second holds P2[9:2], the third holds P3[9:2], and the fourth holds P4[9:2]. The 5th location holds the 2 LSBs of each of the first 4 pixels. Merging these 2 bits with P1[9:2], P2[9:2], P3[9:2] and P4[9:2] reconstructs the first 4 pixels. This should be repeated for the complete video frame.
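The merge step above could look roughly like the sketch below. It is not an official host implementation. The names `unpack_raw10` and `expected_packed_size` are hypothetical, and the bit positions inside the 5th byte (P1[1:0] in bits 1:0, P2[1:0] in bits 3:2, P3[1:0] in bits 5:4, P4[1:0] in bits 7:6) are an assumption inferred from the order in which the second clock cycle lists them. The input must already be free of UVC header bytes.

```python
from typing import List


def expected_packed_size(width: int, height: int) -> int:
    """Bytes per frame when every 4 pixels occupy 5 bytes."""
    return width * height * 5 // 4


def unpack_raw10(packed: bytes) -> List[int]:
    """Rebuild 10-bit pixels from 5-byte groups: four MSB bytes plus one LSB byte."""
    if len(packed) % 5 != 0:
        raise ValueError("packed data length must be a multiple of 5 bytes")
    pixels = []
    for i in range(0, len(packed), 5):
        b0, b1, b2, b3, lsbs = packed[i:i + 5]
        for k, msb in enumerate((b0, b1, b2, b3)):
            # Assumed layout: pixel k of the group uses bits [2k+1 : 2k] of the 5th byte.
            low = (lsbs >> (2 * k)) & 0x3
            pixels.append((msb << 2) | low)
    return pixels


# One group: MSB bytes 0xFF, 0x00, 0x55, 0xAA and LSB byte 0b10_01_00_11.
group = bytes([0xFF, 0x00, 0x55, 0xAA, 0x93])
print(unpack_raw10(group))  # [1023, 0, 341, 682]
```

Under these assumptions a 1920x1080 frame should contain `expected_packed_size(1920, 1080)` = 2592000 bytes of pixel data, which gives a quick check that no header bytes are left in the capture.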

As mentioned before, currently we do not have an example host application/project to share for this. But, we have seen many customers reconstructing the original video stream by following this procedure.

Jayakrishna


Hello,

1. Based on the KBA, when 24 bit output is used, the GPIF II block samples the data as follows:

- First clock cycle - P1[9:2], P2[9:2], P3[9:2],

- Second clock cycle - P4[9:2], P1[1:0], P2[1:0], P3[1:0], P4[1:0], P5[9:2]

- Third clock cycle – and so on.

2. For the first clock cycle (P1[9:2], P2[9:2], P3[9:2]), the data is presented to the GPIF II pins as follows:

Pixel bit - GPIF II pin

P1[2] - DQ0 LSB

P1[3] - DQ1

....

....

P3[9] - DQ23 MSB

This data will then be put in little endian format into the DMA buffers.

3. This process will be repeated by the GPIF II block for all the clock cycles.

CX3 will add a 12 byte UVC header at the start of each DMA buffer. This gives information on the End of frame. The data that follows after this UVC header will be the frame data that was sampled and stored into the DMA buffers by the GPIF II block.

Jayakrishna


Hello,

We are trying to decode the image based on the previous reply, but we didn't get the correct image. We captured the raw image, split it into 8-bit packets, removed every 5th packet, and re-plotted the image in 1920x1080 format.

Kindly tell us how to decode this single frame to obtain the image.

Also, is there any way to change the data packing format, or to confirm the data packing format?

Thank you


Hello,

If the 5th packet is removed, you will lose data. In your configuration, each pixel is 10 bits wide. If the 5th packet is removed, every pixel will be represented by only 8 bits.

The data is packed and stored in CX3 as per my previous response. Basically, you need to combine the bits from packet 5 and packet 1 to reconstruct the first pixel. This procedure should be used for all the pixels to reconstruct the image. While doing this reconstruction, the data ordering mentioned in my previous response should also be considered.

Jayakrishna


Hello,

>> removing 5th byte

The basic way to convert a 10-bit value to 8 bits is to divide it by 4, which is a right shift by 2 bits. So if we remove the 5th byte, it simply acts as an 8-bit conversion, and we get an 8-bit image instead of a 10-bit image.

So removing 5th byte will not affect the image structure.
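This claim can be checked with a small sketch, assuming the 5-byte layout described earlier in this thread (four MSB bytes followed by one byte of LSB pairs). The helpers `pack_raw10` and `drop_fifth_byte` are hypothetical names used only for illustration.

```python
def pack_raw10(pixels):
    """Pack 10-bit pixels into 5-byte groups (assumed layout: 4 MSB bytes + 1 LSB byte)."""
    if len(pixels) % 4 != 0:
        raise ValueError("pixel count must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(pixels), 4):
        group = pixels[i:i + 4]
        out += bytes(p >> 2 for p in group)   # upper 8 bits of each pixel
        lsbs = 0
        for k, p in enumerate(group):
            lsbs |= (p & 0x3) << (2 * k)      # lower 2 bits, pixel k at bits [2k+1 : 2k]
        out.append(lsbs)
    return bytes(out)


def drop_fifth_byte(packed: bytes) -> bytes:
    """Remove every 5th byte, keeping only the four MSB bytes of each group."""
    return bytes(b for i, b in enumerate(packed) if i % 5 != 4)


pixels = [1023, 0, 341, 682, 5, 6, 7, 8]
eight_bit = drop_fifth_byte(pack_raw10(pixels))
# Same result as shifting every 10-bit pixel right by 2.
print(eight_bit == bytes(p >> 2 for p in pixels))  # True
```

The equality only holds when the data starts on a 5-byte group boundary, for example after any header bytes have been removed. A misaligned start would discard the wrong bytes.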

In the image from the previous response, you can see that a large number of pixels have been shifted to the right.

If possible, please share any image taken in the same 1080p 60 fps configuration. I'm sure the data is not packed the way you described in the previous response.

Kindly check for that.

Thankyou.


Hello,

Please share snapshots of the following:

1. Original image

2. The image obtained after removing the 5th packet (the 2 LSBs of each pixel)

3. The image obtained after reconstruction of the image based on the procedure mentioned in my previous response

Also, please refer to the following community thread where another customer followed the same procedure to reconstruct the image:

https://community.cypress.com/t5/USB-Superspeed-Peripherals/Raw10-to-UVC-using-24bit-bus/m-p/166713

As mentioned in the thread, the customer was able to reconstruct the original image using the same procedure mentioned in my previous response.

Jayakrishna


Hello,

Here I'm sharing the images that you asked for:

Original view taken from my mobile camera

The image modified by us (8 bit image by removing 5th byte)

Image modified based on your previous response (bits from the 5th byte merged into the previous 4 bytes)

Image captured using normal camera app

original image captured using the 1080p 30fps mode.

Please find attached the MATLAB code that we used to decode this image.

If possible, kindly share code in any language (Python, C, C++, Java, etc.) to decode this image. It would help us develop a custom application to view it.

Thank you
